The Curse of Regional Traffic in Write-Intensive ClickHouse® Applications

Running ClickHouse across availability zones on AWS, GCP, or Azure? Reduce cross-zone network costs with zone-aware load balancing, Keeper, and Kafka.
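The core idea behind zone-aware load balancing can be sketched in a few lines. A client prefers a replica in its own availability zone and only falls back to a replica in another zone, which incurs cross-zone traffic, when no local one exists. This is a minimal illustration under stated assumptions, not how ClickHouse, Keeper, or Kafka implement it. The `Replica` type, the `pick_replica` function, and the host and zone names are all hypothetical.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Replica:
    # Hypothetical replica descriptor: a host name plus the availability zone it runs in.
    host: str
    zone: str


def pick_replica(replicas, client_zone, rng=random):
    """Pick a replica, preferring one in the client's own availability zone.

    Falls back to any replica (and therefore cross-zone traffic) only when
    no replica shares the client's zone.
    """
    if not replicas:
        raise ValueError("no replicas available")
    same_zone = [r for r in replicas if r.zone == client_zone]
    candidates = same_zone or list(replicas)
    return rng.choice(candidates)


# Usage with hypothetical hosts in two zones.
replicas = [
    Replica("replica-a", "us-east-1a"),
    Replica("replica-b", "us-east-1b"),
]
print(pick_replica(replicas, "us-east-1b").host)  # only local candidate: replica-b
```

Picking at random among same-zone candidates spreads load within a zone. The fallback keeps the system available when a zone has no healthy replica, at the price of paying for cross-zone transfer in that case.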

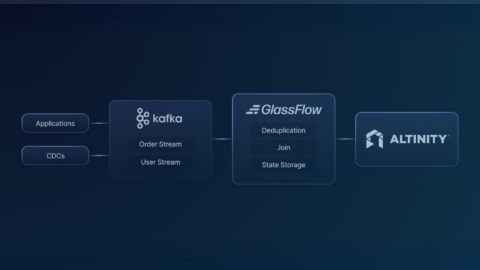
