Introducing EventNative | an easy way to put your event stream into ClickHouse®

Guest post from Vladimir Klimontovich at Jitsu (formerly kSense)

Editor’s Note. The Jitsu team are maintainers of the EventNative project on Github, which moves event records efficiently into data warehouses like ClickHouse. We’re delighted to share their intro to EventNative on the Altinity Blog. Contact us at info@altinity.com if you have new technology to share with the ClickHouse community. Please know, that shortly after we first published this article, the team went through a brand update and changed from kSense to Jitsu.

Data has become an invaluable asset that helps companies understand users, predict behavior, and identify trends. EventNative, an open-source project by Jitsu is designed to simplify event data collection. EventNative supports a few data warehouses as storage backends, and ClickHouse is one of them.

This article shows how to set up EventNative with ClickHouse and gives operational advice on how to achieve the best performance and reliability

Getting data to ClickHouse is not as easy a task as it seems. Streaming millions of events from different applications where each event has its own structure can be very challenging. Things can become much more complicated when different versions of the same application are running in production (such as the different versions of iOS app).

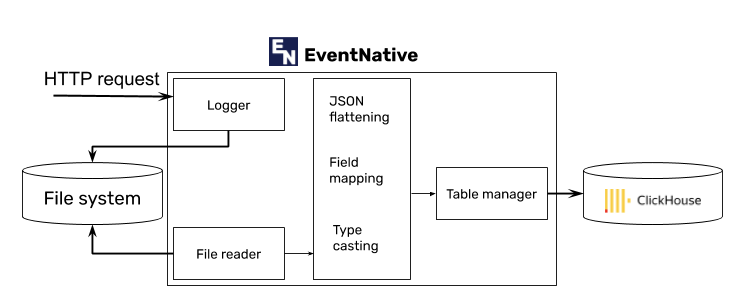

EventNative’s architecture is very efficient and robust. It consists of a lightweight HTTP server that accepts an incoming event-stream (JSON objects) and buffers it to local-disk. A separate thread takes care of processing the buffer, mapping JSON to ClickHouse tables, adjusting the schema, and storing the data.

ClickHouse and EventNative quick-start

In this section we’ll configure a single node installation of ClickHouse and EventNative using official Docker images.

Note that this is a dev setup to get things going. In production scenarios you would want to deploy multiple EventNative nodes and to enable ClickHouse replicas to ensure availability of data as well as scale throughput.

1. Pull latest Docker images

docker pull ksense/eventnative:latest && docker pull yandex/clickhouse-server:latest

2. Start ClickHouse

mkdir ./clickhouse_data docker run --name clickhouse-test -p 8123:8123 \ -v $PWD/clickhouse_data:/var/lib/clickhouse yandex/clickhouse-server

3. Configure EventNative

Put the following content to ./eventnative.yaml

server: auth: - server_secret: 'ia7i92rqp3mh' # access token. We will need it later for sending events through HTTP API destinations: clickhouse: mode: stream clickhouse: dsns: - "http://default:@host.docker.internal:8123?read_timeout=5m&timeout=5m" db: default data_layout: mapping: - "/field_1/sub_field_1 -> " #removing field rule - "/field_2/sub_field_1 -> /field_10/sub_field_1" #renaming field rule - "/field_3/sub_field_1/sub_sub_field_1 -> (timestamp) /field_20" #renaming field and type casting rule

Also, create a directory for logs.

mkdir ./eventnative-logs

4. Start EventNative

docker run -d -t --name eventnative-test -p 8001:8001 \ -v $PWD/eventnative.yaml:/home/eventnative/app/res/eventnative.yaml \ -v $PWD/eventnative-logs:/home/eventnative/logs/events/ ksense/eventnative:latest

5. Send test event and check that it landed in ClickHouse

Put the following JSON to ./api.json:

{

"eventn_ctx": {

"event_id": "19b9907d-e814-42d8-a16d-c5da51e01531

},

"field_1": {

"sub_field_1": "text1",

"sub_field_2": 100

},

"field_2": "text2",

"field_3": {

"sub_field_1": {

"sub_sub_field_1": "2020-09-25T12:38:27.763000Z"

}

}

}Run the following command:

curl -X POST -H "Content-Type: application/json" -d @./api.json \ 'http://localhost:8001/api/v1/s2s/event?token=ia7i92rqp3mh' echo 'SELECT * FROM events;' | curl 'http://localhost:8123/' --data-binary @-

You’ll see one event in the database. The test worked!

6. Test event buffering

One of the core features of EventNative is event buffering. Events are written to an internal queue with disk persistence. If a destination (ClickHouse in our case) is down, data won’t be lost! It will be kept locally until ClickHouse is up again.

Let’s test this feature.

Put the following JSON to ./api2.json:

{

"eventn_ctx": {

"event_id": "4748c7bb-50d4-43a7-91b4-21a5bcccb12e"

},

"field_1": {

"sub_field_1": "text1",

"sub_field_2": 100

},

"field_2": "text2",

"field_3": {

"sub_field_1": {

"sub_sub_field_1": "2020-09-25T12:38:27.763000Z"

}

}

}Now let’s test buffering.

1. Shutdown ClickHouse:

docker stop clickhouse-test

2. Send an event:

curl -X POST -H "Content-Type: application/json" -d @./api2.json 'http://localhost:8001/api/v1/s2s/event?token=ia7i92rqp3mh'

3. Verify that ClickHouse is down:

echo 'SELECT * FROM events;' | curl 'http://localhost:8123/' --data-binary @-

4. Start ClickHouse again:

docker start clickhouse-test

5. Wait for 60 seconds, then verify that event hasn’t been lost:

echo 'SELECT * FROM events;' | curl 'http://localhost:8123/' --data-binary @-

If you see the event on the last step, the test succeeded.

Schema management with EventNative and ClickHouse

EventNative is designed to be a schema-less component in your stack. This means you don’t have to create table schemas and maintain them in advance. EventNative takes care of it automatically! Each incoming JSON field will be mapped to a SQL field. If the field is missing, it will be automatically created with ClickHouse.

It’s particularly useful when one engineering team is in charge of event structure, and another team operates ClickHouse. As an example: a frontend developer may start sending very simple data to track product page views (product_id and price), and add more sophisticated fields later (currency, images). It’s nice to have.

Example:

Event JSON

{

"product_id": "1e48fb70-ef12-4ea9-ab10-fd0b910c49ce",

"product_price": 399.99,

"price_currency": "USD"

"product_type": "supplies"

"product_release_start": "2020-09-25T12:38:27.763000Z"

"images": {

"main": "picture1"

"sub": "picture2"

}

}Automatically created table structure

"product_id" Nullable(String), "product_price" Nullable(Float64), "price_currency" Nullable(String), "product_type" Nullable(String), "product_release_start" Nullable(DateTime), "images_main" Nullable(String), "images_sub" Nullable(String)

By default, all fields will be created as Nullable. However, Nullable fields have a performance overhead. To avoid that, it’s possible to list non-nullable fields in a mapping configuration. The following configuration will make schema look like (“product_id” String, “product_price” Float64, “price_currency” String, …)

non_null_fields: ['product_id', 'product_price', 'price_currency']

The next version of EventNative will be able to create all fields as non-nullable by default.

Mapping configuration details

EventNative can be configured to apply particular transformations to incoming JSON objects such as:

- Remove fields

- Rename fields (including moving element to another node)

- Explicitly defining the type of the node

Example:

rules: - "/field_1/subfield_1 -> " #removing field rule - "/field_2/subfield_1 -> /field_10/subfield_1" #renaming field rule - "/field_3/subfield_1/subsubfield_1 -> (timestamp) /field_20" #renaming field and type casting rule

Source

{

"eventn_ctx": {

"event_id": "19b9907d-e814-42d8-a16d-c5da51e01530"

// this field indicates a unique id

},

"field_1": {

"sub_field_1": "text1",

"sub_field_2": 100

},

"field_2": "text2",

"field_3": {

"sub_field_1": {

"sub_sub_field_1": "2020-09-25T12:38:27.763000Z"

}

}

}Mapped and flattened JSON

{

"eventn_ctx_eventn_id": "19b9907d-e814-42d8-a16d-c5da51e01530",

"field_1_sub_field_1": "text1",

"field_1_sub_field_2": 100,

"field_2": "text2",

"field_3_sub_field_1_sub_sub_field_1": "2020-09-25T12:38:27.763000Z"

}See a full description of this feature in the documentation.

Performance Tips

ReplacingMergeTree (or ReplicatedReplacingMergeTree) is the best choice for data produced by EventNative. Here’s why:

- Usually, data produced by EvenNative is used in aggregated queries, such as the number of events per period satisfying filtering conditions. MergeTree engine family shows great performance for aggregation queries.

- ReplacingMergeTree (unlike ordinary MergeTree) has a nice side-effect of data deduplication. Often, mistakes are found in data after it has been loaded. Sometimes, a replay is required. Since EventNative can optionally keep a copy of data locally for a while, it’s possible to write a script to fix data and send it to EventNative once again. ReplacingMergeTree will avoid data duplication provided each event has a unique id and the id is used as a key.

If the destination table is missing, EventNative will create the table with ReplacingMergeTree or ReplicatedReplacingMergeTree if cluster size is greater than 1. However, it’s possible to configure the engine manually. Please, read more about table creation in the documentation.

Learning More

EventNative is an open-source project maintained by Jitsu. Jitsu makes it easy to capture data from your web apps, mobile apps, physical devices and SaaS platforms at scale on your own environment. If you need help getting EventNative setup feel free to send us a ping at: team@eventnative.com

ClickHouse® is a registered trademark of ClickHouse, Inc.; Altinity is not affiliated with or associated with ClickHouse, Inc.